
AI Can Produce The Creative, But Consumers Still Want The Human Version

Greg Shumchenia, VP of Brand Strategy at Kinder’s, is examining how AI-generated advertising may reshape consumer trust and long-term brand equity.


The advertising industry has spent the last two years racing to understand how far generative AI can push creative production. Agencies and marketing teams are rapidly testing the technology across everything from copywriting and image generation to media planning and large-scale content workflows, often focusing on how quickly AI can produce polished work. In many cases, the creative itself is becoming technically "good enough" to pass internal reviews and perform reasonably well in testing environments. But amid the industry excitement around efficiency and scale, a more fundamental question is starting to emerge: whether consumers actually want to engage with advertising that feels increasingly synthetic in the first place.

Greg Shumchenia, VP of Brand Strategy at Kinder's, has spent much of his career navigating the evolving relationship between technology, creativity, and long-term brand building. A two-time Ad Age A-List honoree, Shumchenia previously led global brand and strategy work at Intuit Mailchimp, where his teams actively integrated AI into marketing workflows and creative operations. Now he is examining a different side of the conversation: not simply how efficiently AI can produce content, but how synthetic media may shape consumer trust, brand perception, and emotional connection over time.

"It’s not a quality signal, it’s a consent signal. AI can already clear the bar for making a good ad. The real question is whether people actually want to see it, and right now they’re telling us they don’t," says Shumchenia. Recent consumer feedback suggests that skepticism around AI-generated advertising remains widespread, even as the underlying creative quality improves. Surveys surrounding this year’s Super Bowl advertising cycle found that just 17% of respondents wanted to see more AI-generated advertising, suggesting that the overwhelming majority of consumers are still not embracing the format. For Shumchenia, many ad-testing environments overlook an important factor entirely: how consumer perception changes once people understand how the creative was actually made.

The pyramid problem

The challenge extends far beyond whether an AI-generated ad looks convincing on the surface. Consumer reactions can shift dramatically once audiences realize synthetic tools were involved, particularly when brands appear vague or evasive about the process behind the work. As generative content becomes easier to disguise as traditional commercial production, the larger risk is not necessarily a single backlash moment, but the gradual weakening of consumer trust over time. "Building brand equity is like building a brick wall brick by brick. It's one story and one interaction at a time," Shumchenia explains. "A bad PR day won't just knock a bunch of those bricks down, it can slowly degrade the size and the quality of your bricks. It can have the opposite effect of building."

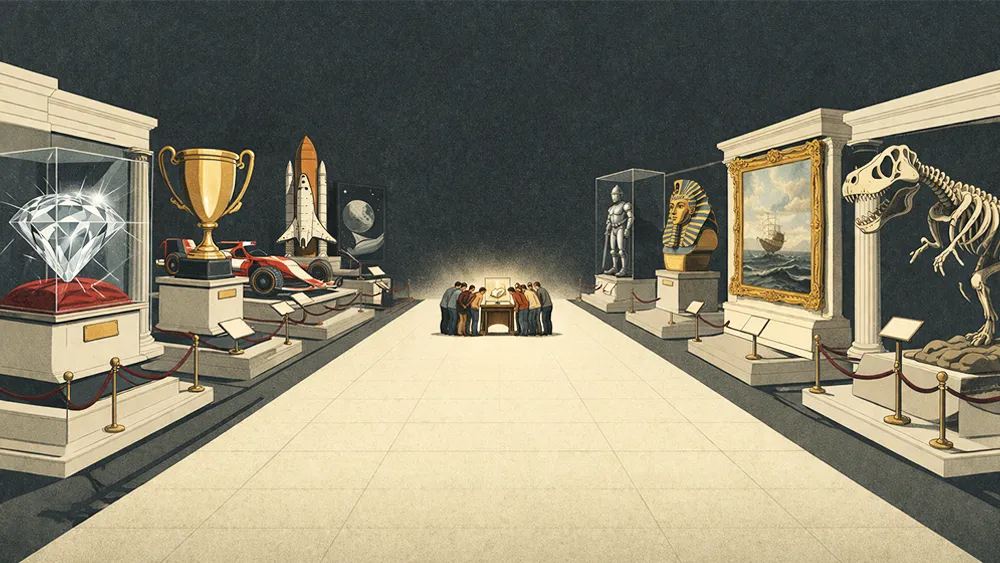

Recent backlash surrounding SVEDKA’s Super Bowl campaign illustrated how quickly audience perception can change once consumers begin questioning the human involvement behind a piece of creative work. The criticism was not simply about the use of AI itself, but about the growing disconnect between a brand’s emotional positioning and the perceived absence of human craft behind the execution. Shumchenia believes the emotional impact of advertising can weaken once audiences realize a piece was generated primarily through automated systems rather than human expression. "Once you know that something is AI generated, it might have fooled you into thinking it was human generated, but once you know, the goodness of that kind of melts away. Think about the Egyptian pyramids. If you learned that those were just AI generated and assembled by robots, they stop being impressive. It stops feeling like art."

An artisanal advantage

To see where this is heading, Shumchenia suggests looking at the grocery aisle. Industrial, mass-produced food was initially embraced for its convenience. Over time, a premium emerged around "real" ingredients and visible craft. "When mass production food hit in the mid-20th century, consumers welcomed that convenience. Just three generations later, the pendulum has swung back to handmade, artisanal, small batch, organic, and local. Those are all premium positionings in the food category now."

Shumchenia expects a similar arc to play out with creative work. As AI-generated content becomes more common, audiences may start looking for signals of human effort, especially in the brands they care about most. "I could see AI kind of becoming the new canned food or Styrofoam. Both of those things were incredible innovations at the time, and they were embraced by most people, but freshness, quality, and sustainability eventually trumped convenience and affordability," he says. "We still use canned food and Styrofoam, but usually very selectively and begrudgingly. We certainly don't invite guests over for dinner and dump a can of food out onto a Styrofoam plate."

The more sustainable approach may come down to where brands decide AI belongs in the creative process. Shumchenia believes practical internal uses, like analyzing consumer feedback, identifying patterns, or prototyping campaign concepts, can help teams move faster without fundamentally changing the audience experience. The tension tends to increase once AI-generated material moves into consumer-facing creative, where audiences begin evaluating not just the message itself, but the human involvement behind it. "I think it's very important to watch that line where the work starts to become consumer-facing. That's where we cut AI out, usually," he explains. "We say, okay, great, we've used it to prototype this or get buy-in from the organization on what we want to do. Now let's get humans to really make it."

When cost cutting gets costly

Beyond the reputational concerns, the economic case for generative AI may be far less straightforward than many marketers initially assumed. Similar conversations are already emerging in software development, where businesses experimenting with AI-assisted coding are beginning to encounter the operational expense tied to constant iteration. "People are beginning to replace coders with Claude Code and similar AI tools, but there are conversations around the actual costs of that AI to a business," Shumchenia says. "It's usually bought as tokens or in subscription fees for LLMs. Those costs are starting to get really high to have to constantly ask AI to iterate or redo work that wasn't exactly what you needed."

Rather than making stronger creative decisions upfront, some brands are leaning on AI to generate massive numbers of ad variations in hopes that performance testing will eventually surface a winner. Shumchenia believes that approach risks becoming a false economy, where the pursuit of cheap scale ultimately costs more than investing in fewer, more thoughtful campaigns led by experienced creative teams. "You're going to end up paying more than it would have cost to pay an experienced, talented human team to strategize, concept, and build five ads that you could use all year, instead of asking AI to produce thousands to find out which one works." As the industry continues experimenting with where AI belongs inside marketing workflows, he expects plenty of missteps along the way. "We're going to fail really fast," Shumchenia concludes. "It's inevitable, but it's all about risk mitigation. I'm trying to fail the least."